After Typhoon Kalmaegi brought torrential rains and flash floods across the central Philippines, an AI-generated picture began spreading online. The image was falsely presented as showing the real aftermath of the storm's devastation. It was later identified as having been made with Google's artificial intelligence tools and was traced to an account known for sharing computer-generated visuals.

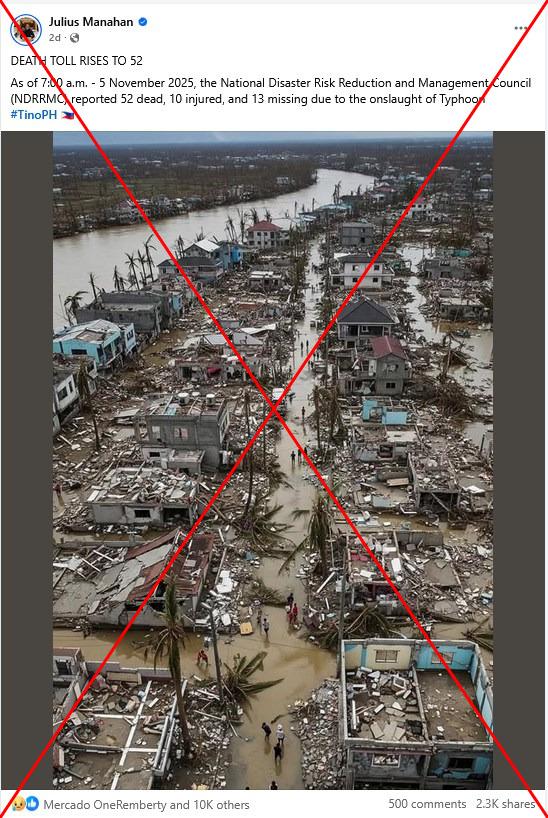

One viral Facebook post from November 5, 2025, carried a bold caption claiming, "DEATH TOLL RISES TO 52." It attributed the figure to the National Disaster Risk Reduction and Management Council (NDRRMC) and referred to the storm by its local name, Typhoon Tino. The image showed shattered homes, flooded neighborhoods, and uprooted trees: scenes that evoked real suffering but were not authentic.

Typhoon Kalmaegi, one of the most destructive storms to strike the Philippines in 2025, left a severe trail of damage. The confirmed death toll has reached 188, with 135 others still missing. Although the storm did cause catastrophic flooding, the viral image is not genuine footage of the disaster.

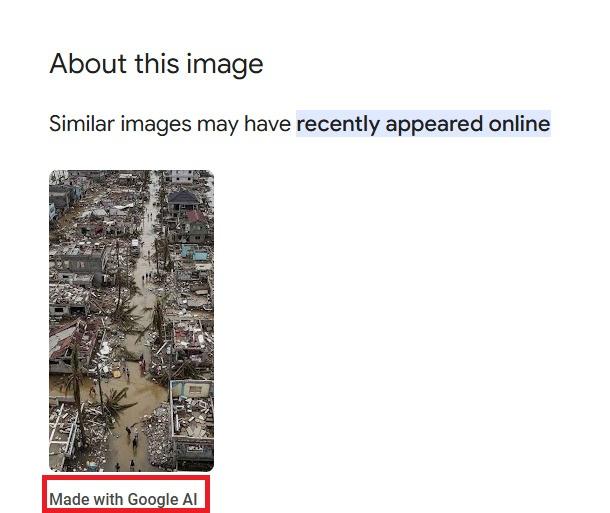

The misleading image also circulated on Thai and Filipino social media. Its realistic appearance led many to believe it was a genuine photograph from the disaster area. However, Google's reverse image search flagged the content as "Made with Google AI," confirming its artificial origin.

The detection relied on SynthID, a watermarking system launched in 2023 by Google DeepMind. SynthID embeds an imperceptible watermark into images generated with Google's AI tools, which detection tools can later read to identify the content as AI-generated and help fact-checkers trace its source. Without such tools, fabricated images like the one linked to Typhoon Kalmaegi could easily mislead the public.

A search using related keywords uncovered an earlier Facebook post dated November 4. It featured the same image but included a disclaimer explaining that it was "AI-generated and intended for educational awareness about climate impacts." The post also urged users to rely on verified updates from agencies such as PAGASA, the NDRRMC, and local authorities during weather emergencies.

The creator's profile states that they specialize in producing AI-generated visuals. On closer inspection, the image also shows telltale flaws common in AI artwork, such as figures with distorted limbs and unnatural shadows. Inconsistencies like these often expose synthetic imagery, even when it looks realistic at first glance.

AFP has repeatedly exposed similar cases in which AI-generated disaster photos were passed off as real. These incidents highlight the growing challenge of AI-fueled misinformation. The fabricated Typhoon Kalmaegi image is the latest example, underscoring the need for public awareness and media literacy to keep false visuals from spreading further.